Management and controlling of data science projects

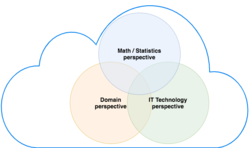

Data science aims to extract knowledge from data through structured analysis, which promises, among other things, improved performance for businesses and other organizations (Martinez 2017). For example, e-commerce companies such as Amazon build product recommendation systems (recommenders) from user data to generate additional sales and respond quickly to customer behavior (Chen et al. 2012). Whether in politics, public safety, medicine, or geology, knowledge extracted from data can provide useful insights and drive progress in many sectors (Chen et al. 2012). These opportunities, together with the growing amount of data generated and collected every day, make the discipline increasingly important. IT-based projects frequently fail completely, or at least deliver their product late or with reduced scope, which underscores the need for appropriate project management (Aichele and Schönberger 2014, pp. 17-18). Project controlling in particular, including cost-benefit analysis of the project as a whole, provides essential input for a company's decisions. Data science (DS) projects, whose number is growing because of the many possible applications mentioned above (Martinez 2017), should be treated separately in this respect because of their special characteristics. This is underpinned, among other things, by the development of reference models (e.g., CRISP-DM, ASUM-DM, TDSP) for the distinctive DS process, which attempt to divide it into recurring phases with defined tasks (Chapman et al. 2000; Provost and Fawcett 2013). The existence of these models illustrates that DS projects warrant special status within project management. However, existing approaches seem to be either not applied or qualitatively insufficient: according to one study, a high percentage of data science projects fail or do not deliver the desired result (VentureBeat 2019).
This is supported by Martinez et al. (2021), who identify, among other things, weaknesses in team and project management in data science projects. Developing a process model that aims to eliminate these deficiencies therefore promises to be relevant both for companies seeking to carry out such projects and for the corresponding research fields.

Drawing on existing process models from IT project management, IT controlling, and the data science literature, a template for project initiation and project controlling for data science projects is to be developed. Companies and organizations can apply it to their own projects, and it can contribute to improving the success rate of data science projects. For concrete project planning, it is helpful first to identify the general tasks in a data science project, which can be done through a literature analysis of existing process models in this field. The resulting rough phase classification (business understanding, data collection and preparation, analysis procedures, evaluation, deployment and operation) simplifies the controlling- and management-specific aspects of such a project, since the required resources can be derived directly from the individual tasks. For example, cost factors can be identified for the effort estimation of each task, and comprehensible calculation instructions are then provided for them. Project management and project controlling templates are another component of the artifact. These are uniform document templates to be created for the various phases of the project. One example is the data description report, which provides detailed information about the data sets used in the data science project.
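The idea of deriving an effort estimate from cost factors per phase can be illustrated with a minimal Python sketch. It is not the calculation instruction of the artifact itself. The `CostFactor` class, the `estimate_costs` function, the factor names, and all quantities and unit costs are hypothetical and chosen only for illustration. Only the five phase names are taken from the phase classification described above.

```python
from __future__ import annotations

from dataclasses import dataclass

# Phase names follow the rough phase classification in the text.
PHASES = (
    "business understanding",
    "data collection and preparation",
    "analysis procedures",
    "evaluation",
    "deployment and operation",
)


@dataclass(frozen=True)
class CostFactor:
    """A single cost driver (hypothetical structure, for illustration only)."""

    name: str
    quantity: float   # e.g. person-days or compute hours
    unit_cost: float  # cost per unit, in an arbitrary currency

    @property
    def cost(self) -> float:
        return self.quantity * self.unit_cost


def estimate_costs(factors_by_phase: dict[str, list[CostFactor]]) -> dict[str, float]:
    """Sum the cost factors of each phase; phases without factors cost 0."""
    unknown = set(factors_by_phase) - set(PHASES)
    if unknown:
        raise ValueError(f"unknown phases: {sorted(unknown)}")
    return {
        phase: sum(f.cost for f in factors_by_phase.get(phase, []))
        for phase in PHASES
    }


# Assumed example values; real figures would come from the project itself.
plan = {
    "business understanding": [CostFactor("stakeholder workshops", 5, 800)],
    "data collection and preparation": [
        CostFactor("data cleaning (person-days)", 10, 600),
        CostFactor("cloud storage (GB-months)", 100, 2),
    ],
}

totals = estimate_costs(plan)
for phase, cost in totals.items():
    print(f"{phase}: {cost}")
print("total:", sum(totals.values()))
```

Keeping the estimate as a plain sum of quantity times unit cost per phase makes each figure traceable to a task, which matches the aim of comprehensible calculation instructions. A real template would likely add further elements such as risk surcharges or benefit estimates.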

As a first step, the artifact is evaluated using publicly available application projects or use cases, such as predictive maintenance or fraud detection, on common IT system landscapes (e.g., Google Cloud Platform). The KNIME Analytics Platform, open-source software for building data science projects as intuitive workflows, could also be used for this purpose.